
Crawlability: Understanding Its Importance

If you're responsible for managing a website, you've probably heard the term "crawlability" before. But what does it mean, and why is it so important for (technical) SEO?

In this blog post, we'll dive deep into the concept of crawlability and explore how it impacts the performance of a website in search results. We'll also provide practical tips for improving a website's crawlability and avoiding common crawl errors.

What is Crawlability in SEO?

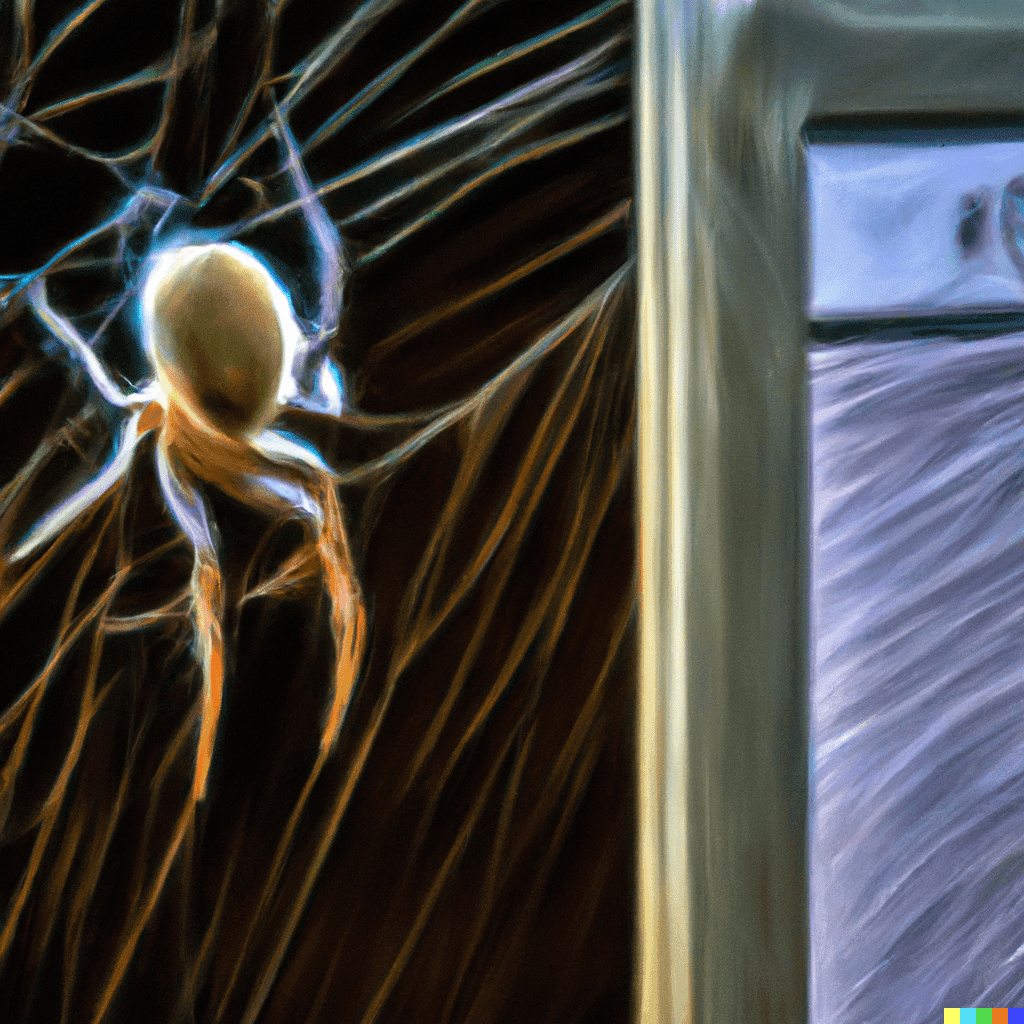

Crawlability refers to the ability of search engines to discover and access the pages on a website. When a search engine's crawlers (also known as spiders or bots) visit a website, they follow links on the site to discover new pages and download their content. This process is called crawling. Storing and organizing that content so it can appear in search results is a separate step, called indexing.

Crawlability is an important factor in SEO because crawling comes first: a search engine can only evaluate a page's content and its relevance to users after it has crawled that page. If a website is not crawlable, search engines cannot access and index its pages, which severely limits its visibility and ranking in search results.

In order to optimize a website's crawlability, it's important to ensure that it is structured in a clear and logical manner, that it does not use technical elements that can be difficult for search engines to interpret, and that it does not contain crawl errors that prevent search engines from accessing its pages.

By improving a website's crawlability, you can increase its chances of ranking well in search results and attracting more organic traffic.

Factors that Impact Crawlability

There are several factors that can impact a website's crawlability, including its structure, the use of technical elements like JavaScript and Flash, and the presence of crawl errors. Let's take a closer look at each of these:

1. Website Structure

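A well-structured website makes it easier for search engines to crawl and understand its content. This includes organizing content into logical sections, using a clear hierarchy of heading tags (H1, H2, etc.), and, most importantly for crawling, using internal links to connect related pages. Crawlers find pages by following links, so a page that no other page links to may never be discovered, and pages buried many clicks away from the home page may be crawled less often.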
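One way to check how well your internal links support crawling is to measure click depth: the minimum number of clicks needed to reach each page from the home page. The sketch below assumes you already have the site's internal link graph (the `SITE_LINKS` dictionary and its URLs are hypothetical) and uses a breadth-first search, which reaches every page by its shortest path first.

```python
from collections import deque

# Hypothetical internal link graph: each page maps to the pages it links to.
SITE_LINKS = {
    "/": ["/blog/", "/services/"],
    "/blog/": ["/blog/crawlability/", "/"],
    "/services/": ["/services/seo-audit/"],
    "/blog/crawlability/": ["/services/seo-audit/"],
    "/services/seo-audit/": [],
}

def click_depths(links, home="/"):
    """Return the minimum number of clicks from the home page to each reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first time BFS reaches a page = shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

depths = click_depths(SITE_LINKS)
for page, depth in sorted(depths.items(), key=lambda item: item[1]):
    print(depth, page)
```

Pages missing from the result are not reachable through internal links at all, and pages with a high depth are candidates for extra links from higher-level pages.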

2. Technical Elements

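Search engines may have difficulty with content that depends on JavaScript or Flash. Google can render JavaScript, but rendering may happen some time after the initial crawl, and not every search engine crawler executes scripts, so content and links that only appear after JavaScript runs can be missed or discovered late. Flash is obsolete: Adobe ended support for it at the end of 2020, and Google Search no longer indexes Flash content. Make sure important content and navigation links are present in the page's HTML, as standard anchor links with an href attribute, so that search engines can reach them.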
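To see why standard links matter, here is a minimal sketch of what a crawler that does not execute JavaScript extracts from a page. It uses Python's standard `html.parser` module to collect `href` values from anchor tags; the `LinkExtractor` class and the sample markup are hypothetical. A "link" that only works through an `onclick` handler is invisible to it.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin

class LinkExtractor(HTMLParser):
    """Collects href values from <a> tags, like a crawler that does not run JavaScript."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                # Resolve relative links against the page URL
                self.links.append(urljoin(self.base_url, href))

# Hypothetical page: one real link, one "link" that only works when JavaScript runs
html = """
<a href="/pricing/">Pricing</a>
<span onclick="window.location='/contact/'">Contact</span>
"""

parser = LinkExtractor("https://www.example.com/")
parser.feed(html)
print(parser.links)  # ['https://www.example.com/pricing/']
```

The contact page is never discovered from this page, because its URL only exists inside a script.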

3. Crawl Errors

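Crawl errors occur when a search engine's crawlers encounter problems while trying to access a website's pages. Common causes include broken links and outdated URLs that lead to missing pages (404 errors), server errors (5xx status codes), DNS or connection failures, redirect loops, and pages accidentally blocked by robots.txt. It's important to regularly monitor and fix crawl errors so that search engines can access and index all of the pages you want to appear in search results.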
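Many crawl errors show up as HTTP status codes. As a rough sketch, the hypothetical `classify_crawl_result` helper below groups status codes into the kinds of problems described above. The categories and sample results are illustrative simplifications, and failures such as DNS errors or timeouts never produce a status code at all.

```python
def classify_crawl_result(status_code):
    """Map an HTTP status code to a rough crawl-outcome category (a simplification)."""
    if 200 <= status_code < 300:
        return "ok"
    if 300 <= status_code < 400:
        return "redirect"
    if status_code in (404, 410):
        return "not found"
    if 400 <= status_code < 500:
        return "client error"
    if 500 <= status_code < 600:
        return "server error"
    return "unknown"

# Hypothetical results from one crawl of a site
results = {
    "https://www.example.com/": 200,
    "https://www.example.com/old-page/": 301,
    "https://www.example.com/deleted-post/": 404,
    "https://www.example.com/search/": 503,
}

for url, status in results.items():
    print(f"{status} {classify_crawl_result(status):<12} {url}")
```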

Tips for Improving Crawlability

Here are some practical tips for improving a website's crawlability and its chances of ranking well in search results:

1. Use a clear and logical website structure

Organize your content into logical sections and use heading tags to signal the hierarchy of your content. This makes it easier for search engines to understand the structure and purpose of your pages.

2. Use JavaScript carefully and avoid Flash

JavaScript can be useful, but content and links that only appear after scripts run may be crawled late or not at all. Make sure your key content and navigation links are present in the page's HTML, and check that scripts do not block search engines from accessing your content. Flash is no longer supported by browsers or indexed by Google, so it should be removed entirely.

3. Fix crawl errors

Regularly monitor your website for crawl errors and fix them as soon as possible. Google Search Console, in particular its Page indexing and Crawl stats reports, shows which URLs Google could not crawl or index and why, so you can see how these errors affect your website's presence in search results.
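Once you know which URLs return errors, the fix usually happens on the pages that link to them: update or remove the link, or redirect the missing URL. Assuming you have crawl data in two hypothetical dictionaries (the links found on each page and the status code each URL returned), a sketch like this lists which page links to which failing URL. It also flags redirects, since pointing internal links straight at the final URL saves crawlers an extra request.

```python
# Hypothetical crawl data: which pages link where, and the status each URL returned.
links_from = {
    "/": ["/blog/", "/pricing/"],
    "/blog/": ["/blog/old-post/", "/pricing/"],
    "/pricing/": ["/contact/"],
}
status_of = {
    "/": 200, "/blog/": 200, "/pricing/": 200,
    "/blog/old-post/": 404, "/contact/": 500,
}

def broken_links(links_from, status_of):
    """Return (source, target, status) for each link whose target did not return 2xx.

    A status of None means the target was never fetched.
    """
    report = []
    for source, targets in links_from.items():
        for target in targets:
            status = status_of.get(target)
            if status is None or not 200 <= status < 300:
                report.append((source, target, status))
    return report

for source, target, status in broken_links(links_from, status_of):
    print(f"{source} links to {target} ({status})")
```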

4. Use XML sitemaps

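An XML sitemap is a file that lists the URLs on a website that you want search engines to crawl and index, optionally with extra information such as when each page was last modified (the lastmod field) and how often it changes (the changefreq field). Google uses lastmod when it is consistently accurate but ignores changefreq and priority. XML sitemaps help search engines discover and crawl new and updated pages, especially pages that are poorly linked from the rest of the site.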
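A sitemap is a small XML document in the sitemaps.org format. The sketch below builds one with Python's standard `xml.etree.ElementTree`; `build_sitemap` is a hypothetical helper, and the URLs and dates are placeholders. A single sitemap file may hold at most 50,000 URLs and 50 MB uncompressed, so larger sites split it into several files.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(pages):
    """Return sitemap XML for a list of (url, last_modified) pairs."""
    urlset = ET.Element("urlset", {"xmlns": SITEMAP_NS})
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc          # ElementTree escapes &, <, >
        ET.SubElement(url, "lastmod").text = lastmod  # W3C date, e.g. 2024-01-15
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body

# Hypothetical pages and modification dates
pages = [
    ("https://www.example.com/", "2024-01-15"),
    ("https://www.example.com/blog/crawlability/", "2024-02-01"),
]
sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```

The result would typically be saved as sitemap.xml and referenced from robots.txt with a Sitemap: line or submitted in Google Search Console. Letting ElementTree handle escaping avoids invalid XML when a URL contains characters such as &.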

5. Use internal linking

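Internal linking is the practice of linking to other pages on your own website. Because crawlers discover pages by following links, internal links are one of the main ways search engines find your content; they also help search engines understand how the pages on your site relate to each other. Make sure every important page is linked from at least one other page on your site.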
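Pages that no other page links to, often called orphan pages, cannot be discovered by following your site's own links. A minimal sketch for spotting them, assuming you have the list of sitemap URLs and the internal links found on each page (both hypothetical here), counts inbound internal links per URL and reports the URLs that have none.

```python
from collections import Counter

# Hypothetical data: URLs listed in the sitemap and the internal links found on each page.
sitemap_urls = ["/", "/blog/", "/blog/crawlability/", "/landing/spring-sale/"]
internal_links = {
    "/": ["/blog/"],
    "/blog/": ["/blog/crawlability/", "/"],
    "/blog/crawlability/": ["/blog/"],
    "/landing/spring-sale/": ["/"],
}

def inbound_link_counts(internal_links):
    """Count how many distinct pages link to each URL (self-links ignored)."""
    counts = Counter()
    for source, targets in internal_links.items():
        for target in set(targets):  # count each linking page once
            if target != source:
                counts[target] += 1
    return counts

counts = inbound_link_counts(internal_links)
orphans = [url for url in sitemap_urls if counts[url] == 0]
print(orphans)  # ['/landing/spring-sale/']
```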

6. Use external linking

In addition to internal linking, link out to reputable external sources where they add value for readers. Outbound links don't make your own pages easier to crawl, but citing credible sources can make your content more useful and trustworthy.

Example of How Search Engines Crawl Websites

Search engines use automated programs, called crawlers or spiders, running on large networks of computers to discover and index the billions of pages on the internet. When a crawler visits a website, it follows the links on the site to discover new pages and adds them to its list of pages to crawl.

The crawl process begins with a list of web addresses, known as the seed list. The crawlers start with these seed URLs and follow the links on these pages to discover new URLs. As they crawl these pages, they extract the content and metadata of the pages and add them to their index.
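The seed-list process can be sketched as a breadth-first crawl that keeps a queue of URLs waiting to be fetched (often called the crawl frontier) and a set of URLs already seen. To keep it runnable offline, this sketch replaces real downloads with a hypothetical `FAKE_WEB` dictionary; `fetch_links` and `crawl_from_seeds` are illustrative names, not any search engine's actual implementation, and `max_pages` is only a crude stand-in for a crawl budget.

```python
from collections import deque

# Hypothetical stand-in for the web: each URL maps to the links found on that page.
FAKE_WEB = {
    "https://a.example/": ["https://a.example/about", "https://b.example/"],
    "https://a.example/about": ["https://a.example/"],
    "https://b.example/": ["https://b.example/news", "https://c.example/"],
    "https://b.example/news": [],
    "https://c.example/": ["https://a.example/"],
}

def fetch_links(url):
    """Placeholder for downloading a page and extracting its links."""
    return FAKE_WEB.get(url, [])

def crawl_from_seeds(seeds, fetch, max_pages=100):
    """Breadth-first crawl: start from the seed URLs and queue newly discovered URLs."""
    frontier = deque(seeds)  # URLs waiting to be crawled
    seen = set(seeds)        # URLs already queued, so none is queued twice
    crawled = []             # order in which pages were fetched
    while frontier and len(crawled) < max_pages:
        url = frontier.popleft()
        crawled.append(url)
        for link in fetch(url):
            if link not in seen:
                seen.add(link)
                frontier.append(link)
    return crawled

print(crawl_from_seeds(["https://a.example/"], fetch_links))
```

Using an explicit queue instead of recursion means the crawl depth is limited only by memory, and the queue is a natural place to add prioritization or rate limiting.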

The crawl process is ongoing: search engine crawlers continuously discover new pages and revisit known ones, updating the index with the most current information. This allows search engines to provide relevant, up-to-date search results to users.

Here is a simple example of how a crawler might crawl a website:

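    import requests
    from bs4 import BeautifulSoup
    from urllib.parse import urldefrag, urljoin, urlparse

    def crawl(url, visited=None):
        # Remember which pages have been crawled so no URL is fetched twice
        if visited is None:
            visited = set()
        if url in visited:
            return visited
        visited.add(url)

        # Make a request to the page; skip it if the request fails or is not HTML
        try:
            response = requests.get(url, timeout=10)
        except requests.RequestException:
            return visited
        if 'text/html' not in response.headers.get('Content-Type', ''):
            return visited

        # Parse the HTML of the page
        soup = BeautifulSoup(response.text, 'html.parser')

        # Follow each <a href> link that stays on the same website
        for link in soup.find_all('a', href=True):
            # Turn relative links into absolute URLs and drop #fragments
            linked_url, _ = urldefrag(urljoin(url, link['href']))
            if urlparse(linked_url).netloc == urlparse(url).netloc:
                crawl(linked_url, visited)
        return visited

    # Start the crawl with the seed URL
    crawl('https://www.example.com/')

This script requests the specified URL, parses the HTML of the page, and extracts every link on it. It then resolves each link to an absolute URL and, if the link points to the same website and has not been visited yet, crawls that page too, repeating the process for each page it discovers. Because the function calls itself for every new page, a very large site could exceed Python's recursion limit.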

Of course, real-world crawlers are much more complex, as they must account for factors like crawl budget, crawl rate limits, and robots.txt files. Still, this simple example illustrates the basic process by which a crawler discovers and fetches the pages on a website.
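As one example of those extra rules, a polite crawler checks robots.txt before fetching a URL. Python's standard `urllib.robotparser` module can evaluate the rules; in this sketch the robots.txt content and the `MyCrawler` user agent are hypothetical, and a real crawler would download the site's /robots.txt first. Note that Google ignores the Crawl-delay rule, although some other crawlers honor it.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Crawl-delay: 5
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for url in ["https://www.example.com/blog/", "https://www.example.com/admin/settings"]:
    # can_fetch applies the rules for the given user agent to the URL's path
    print(url, "allowed" if parser.can_fetch("MyCrawler", url) else "blocked")

# Seconds the site asks crawlers to wait between requests (None if not set)
print("Crawl-delay:", parser.crawl_delay("MyCrawler"))
```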

Crawlability is an important aspect of SEO, as it determines how easily search engines can discover and access the pages on a website. By following the tips outlined in this blog post, you can improve the crawlability of your website and increase its chances of ranking well in search results. Don't forget to regularly monitor and fix crawl errors, keep your XML sitemap up to date, and use internal linking so that every important page can be reached, while linking out to reputable sources where it helps your readers.

CEO & Founder

Let us show you an SEO strategy that can take you to the next level

A brief meeting, where we review your position in the market and present the opportunities.
